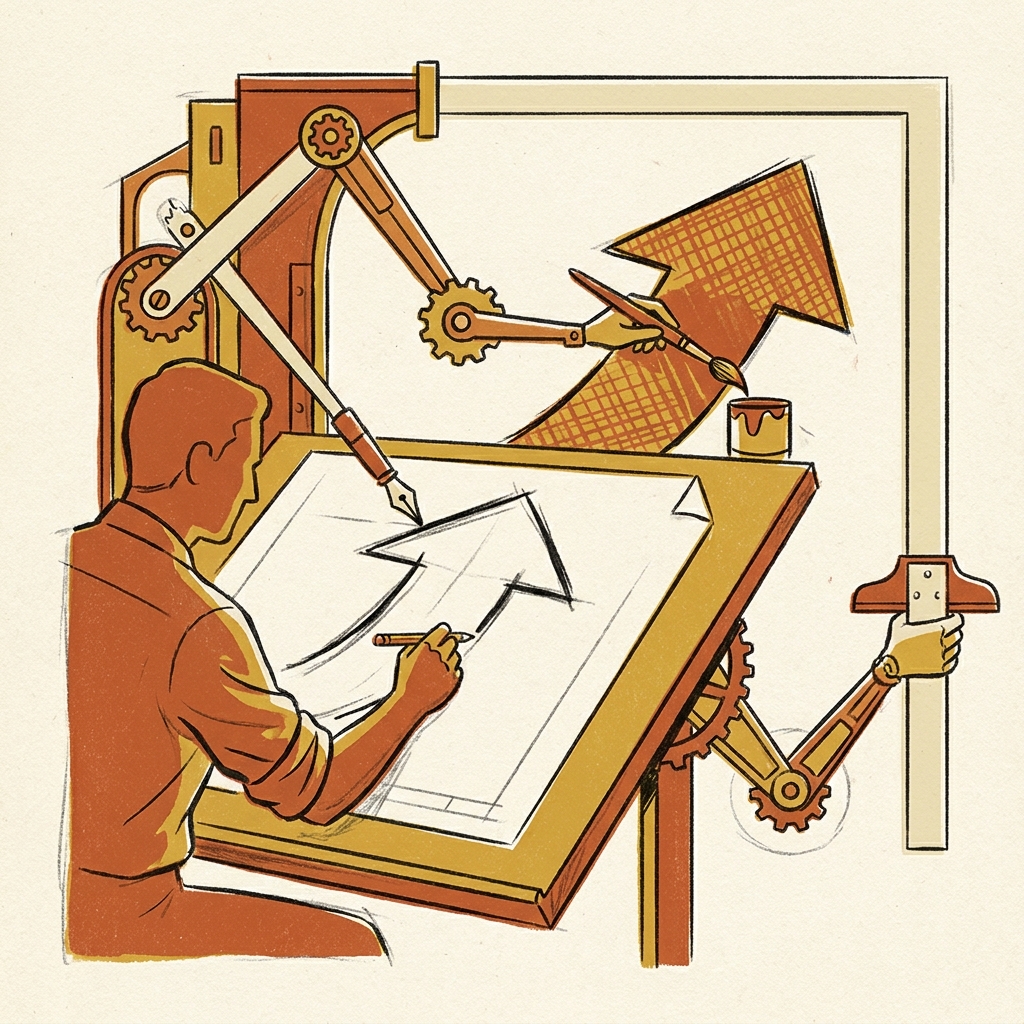

The Third Seismic Shift: Why Agentic Coding Is Rewriting Software Engineering

---

Something happened in February 2026 that didn't get the screaming headlines it deserved. OpenAI quietly disclosed that early versions of GPT-5.3-Codex, its most capable agentic coding model to date, had been used to help train and deploy later versions of itself. A model improving its own successor through practical use, in production. Recursive development, but real.

Meanwhile, MIT Technology Review published a survey of 300 engineering executives and called agentic AI the "third seismic shift" in software engineering this century, ranking it alongside the open-source movement and the DevOps revolution. Half of organisations already call it a top investment priority. Within two years, respondents expect that figure to climb past four-fifths.

The arms race between OpenAI's Codex platform and Anthropic's Claude Code has moved so far beyond autocomplete that comparing them on benchmarks almost misses the point. We're watching the unit of work shift from "a code suggestion in your IDE" to "an autonomous agent planning, executing, testing, and shipping multi-file changes in a sandboxed environment."

And the pace is brutal.

Syntax is a solved problem

By early 2026, the frontier had moved decisively past the era of LeetCode benchmarks. The new battleground is what researchers call System 2 thinking: the ability of an AI to pause, reflect, and reason through complex multi-step problems before committing to a solution.

Consider the milestones that have stacked up since early February:

GPT-5.3-Codex-Spark, launched February 12, delivers over 1,000 tokens per second on Cerebras' Wafer Scale Engine 3 hardware — roughly 15× faster than the base model. It's designed for real-time, interactive coding. Quick edits, rapid iteration, the kind of flow state where latency kills creativity.

GPT-5.4, released March 5, absorbed Codex's frontier coding capabilities into a general-purpose flagship. The coding specialist and the generalist merged.

Claude Opus 4.6 (February 5) and Sonnet 4.6 (February 17) brought 1M-token context windows at standard pricing, along with Anthropic's Managed Agents in public beta and the compaction API for effectively infinite conversations.

The Codex CLI hit v0.120.0 on April 11 with real-time V2 improvements. Claude Code shipped three releases in two days in mid-April, adding prompt caching TTL controls, session recaps, fullscreen TUI rendering, and push notifications.

OpenAI updated its Agents SDK on April 15, adding an in-distribution harness for frontier models to work with files and approved tools within a workspace.

Google introduced new agentic AI-ready tools for Android developers the very next day.

That's six distinct platform advances in roughly ten weeks. The feature velocity alone should make any engineering leader sit up straight.
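To make the Codex-Spark speed claim concrete, here is a back-of-the-envelope sketch in Python. It uses only the two figures quoted above (1,000 tokens per second, treated as a floor, and the rough 15× multiple). The base-model throughput is inferred from those two numbers rather than published, and the 2,000-token patch size is a made-up example.

```python
# Figures from the text: about 1,000 tokens/s for Codex-Spark, roughly 15x the base model.
SPARK_TOKENS_PER_SEC = 1_000
SPEEDUP = 15

# Implied base-model throughput (inferred from the two figures, not a published number).
base_tokens_per_sec = SPARK_TOKENS_PER_SEC / SPEEDUP


def seconds_to_generate(tokens: int, tokens_per_sec: float) -> float:
    """Time to stream `tokens` output tokens at a steady rate, ignoring startup latency."""
    return tokens / tokens_per_sec


# Hypothetical edit size, chosen only for illustration.
PATCH_TOKENS = 2_000
spark_secs = seconds_to_generate(PATCH_TOKENS, SPARK_TOKENS_PER_SEC)
base_secs = seconds_to_generate(PATCH_TOKENS, base_tokens_per_sec)

print(f"base model: ~{base_tokens_per_sec:.0f} tokens/s, {base_secs:.0f} s per patch")
print(f"Spark:      ~{SPARK_TOKENS_PER_SEC} tokens/s, {spark_secs:.0f} s per patch")
```

Under those assumptions the same patch takes about two seconds instead of about thirty, which is the difference between staying in flow and switching tabs.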

From autocomplete to lifecycle management

Here's the part that matters most. The MIT survey found that 51% of software teams are already using agentic AI in at least a limited capacity, and a further 45% plan to adopt it within 12 months. But the real signal is in the ambition: 41% of organisations aim to have AI agents managing the full product development and software development lifecycles for most or all products within 18 months. That figure rises to 72% within two years.

Let that land. We're not talking about a tool that writes a function faster. We're talking about agents that manage the entire process — from requirements through deployment, monitoring, and iteration.

The open-source community is moving in the same direction. On April 16, a project called Superpowers landed on GitHub Trending, introducing a composable skills framework specifically designed for coding agents. Rather than treating the agent as a monolithic brain, it breaks capabilities into modular building blocks that can be combined and extended — a methodology for agent-driven development rather than just another wrapper around an API.

This is the infrastructure layer emerging in real time. Benchmarks told us which model was cleverest. Frameworks like Superpowers, OpenAI's Agents SDK, and Anthropic's Managed Agents are building the plumbing for a world where agents don't just suggest code — they run projects.
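What "composable skills" might look like in practice can be sketched in a few lines of Python. This is an illustration of the general idea only. Every name here (`Skill`, `then`, `plan`, `apply_edits`, `run_tests`) is hypothetical, and none of it is taken from Superpowers, the Agents SDK, or Managed Agents.

```python
from dataclasses import dataclass
from typing import Any, Callable

# A "skill" here is just a named step that transforms a shared context dict.
# All names are hypothetical and not drawn from any real framework.
Context = dict[str, Any]


@dataclass(frozen=True)
class Skill:
    name: str
    run: Callable[[Context], Context]

    def then(self, other: "Skill") -> "Skill":
        """Compose two skills into one that runs them in sequence."""
        return Skill(
            name=f"{self.name}+{other.name}",
            run=lambda ctx: other.run(self.run(ctx)),
        )


def plan(ctx: Context) -> Context:
    # Stand-in for a planning step: one planned edit per file.
    return {**ctx, "steps": [f"edit {f}" for f in ctx["files"]]}


def apply_edits(ctx: Context) -> Context:
    # Stand-in for an editing step: record each planned step as done.
    return {**ctx, "done": list(ctx["steps"])}


def run_tests(ctx: Context) -> Context:
    # Stand-in for a test step: passes only if every planned step was applied.
    return {**ctx, "tests_passed": ctx["done"] == ctx["steps"]}


# Small skills combine into a larger one without changing any of them.
refactor = Skill("plan", plan).then(Skill("edit", apply_edits)).then(Skill("test", run_tests))

result = refactor.run({"files": ["api.py", "models.py"]})
print(refactor.name)           # plan+edit+test
print(result["tests_passed"])  # True
```

The design point is that each building block stays small and replaceable: swapping in a different test step means composing a different pipeline, not rewriting the agent.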

The messy, necessary next chapter

Of course it won't be smooth. The survey respondents flagged compute costs and integration with existing applications as the biggest early challenges. The experts MIT interviewed pointed to a deeper problem: change management. Re-engineering how a team works (its workflows, its trust boundaries, its review processes) to accommodate autonomous agents is genuinely hard. The gains are real: 98% of respondents expect delivery speed to increase, with an average projected acceleration of 37%. But getting there means organisations have to accept a transitional period where agents are powerful enough to cause real damage if ungoverned, but not yet reliable enough to run unsupervised.

That tension is the defining feature of this particular moment. The tools are ahead of the organisational maturity to absorb them. The models can plan multi-file refactors and execute shell commands, but the average engineering team hasn't yet redesigned its sprint ceremonies, code review culture, or deployment pipelines around the assumption that an agent is doing half the work.
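One way to picture a trust boundary is a gate that sits between the agent and the shell. The Python sketch below is a deliberately simple illustration, not how Codex, Claude Code, or any other product actually handles approvals. The `Decision` enum, the `review_command` function, and both program lists are hypothetical, and a real policy would need far more than a check on the program name.

```python
import shlex
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"          # run without asking
    NEEDS_REVIEW = "review"  # pause for a human
    DENY = "deny"            # never run


# Hypothetical policy: these program names are examples, not a recommended list.
AUTO_APPROVED = {"ls", "cat", "grep"}
FORBIDDEN = {"sudo", "shutdown", "mkfs"}


def review_command(command: str) -> Decision:
    """Classify a shell command proposed by an agent, based on its program name."""
    try:
        tokens = shlex.split(command)
    except ValueError:
        return Decision.NEEDS_REVIEW  # unbalanced quotes: let a human look
    if not tokens:
        return Decision.DENY
    program = tokens[0]
    if program in FORBIDDEN:
        return Decision.DENY
    # Shell operators can chain or redirect, so never auto-approve them.
    if any(ch in command for ch in ";|&><`$"):
        return Decision.NEEDS_REVIEW
    if program in AUTO_APPROVED:
        return Decision.ALLOW
    return Decision.NEEDS_REVIEW


for cmd in ["ls -la", "git push --force", "sudo rm -rf /", "cat notes.txt | sh"]:
    print(f"{cmd!r}: {review_command(cmd).value}")
```

Anything the policy does not recognise defaults to human review. That default is the whole point during a transitional period: the agent keeps its speed on the safe path and loses it everywhere else.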

The early gains will be incremental for most — the survey found 52% expect only moderate improvement over two years. But the 9% who think it'll be game-changing are the ones worth watching. They're probably the ones already rethinking their org charts.
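A quick note on the 37% figure, because it is easy to misread. If "delivery speed" means throughput (an assumption, since the summary above doesn't define it), a 37% acceleration does not mean 37% less time. A small Python sketch of the arithmetic, with a hypothetical ten-week project as the example:

```python
def time_saved_fraction(speedup_pct: float) -> float:
    """Fraction of delivery time saved if throughput rises by `speedup_pct` percent."""
    return 1 - 1 / (1 + speedup_pct / 100)


saved = time_saved_fraction(37)

# Hypothetical project length, for illustration only.
weeks_before = 10
weeks_after = weeks_before * (1 - saved)

print(f"time saved: {saved:.0%}")                       # time saved: 27%
print(f"{weeks_before} weeks -> {weeks_after:.1f} weeks")  # 10 weeks -> 7.3 weeks
```

On that reading, the average respondent is projecting roughly a quarter off delivery time. That is meaningful, and it squares with most of them expecting moderate rather than game-changing improvement.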

---

Software engineering has had two genuine paradigm shifts in the last twenty-five years. Open source democratised access to code. DevOps and agile democratised delivery speed. Agentic AI is democratising execution itself — the ability to go from intent to working software with decreasing human intervention at every step.

The recursive training loop behind GPT-5.3-Codex isn't a gimmick. It's a signal. The systems are starting to improve the systems. The developers who figure out how to work with that loop — setting direction, defining constraints, building the guardrails — will be the ones who compound their impact fastest.

Everyone else will still be arguing about whether AI can write "real" code. The evidence suggests it already does.