When Coding Agents Stop Waiting for You

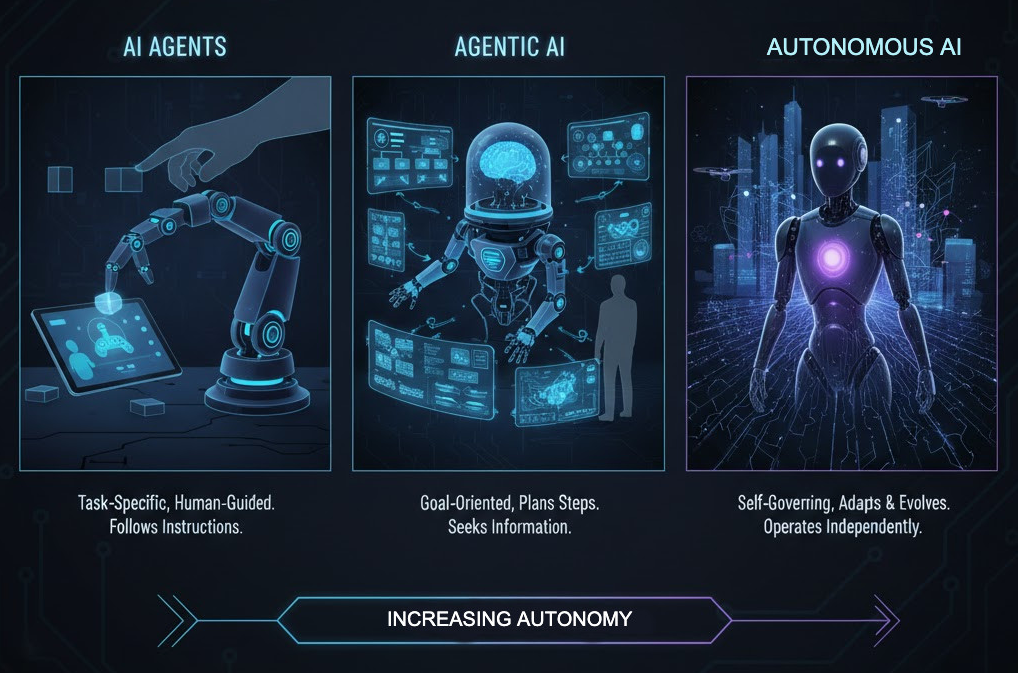

A year ago, “agentic coding” still mostly meant a chat window that could edit a file. You typed a prompt, watched it work, corrected it, and maybe—if you were feeling brave—let it open a pull request.

This week’s news cycle makes that framing feel dated.

Cursor, the agent-powered coding environment, has introduced “Automations”: agents that can launch without you prompting them, triggered by events like a new change landing in the repo, a Slack message, or a timer—then pulling a human in only at the points where judgment is needed (TechCrunch, Mar 5 2026).

GitHub, meanwhile, is telling a story about scale. It says Copilot code review has already delivered 60 million reviews and now accounts for more than one in five code reviews on GitHub, powered by an “agentic architecture” that retrieves repository context and reasons across changes (GitHub Blog, Mar 5 2026).

And reporting in Fortune frames OpenAI’s Codex not as a niche tool but as a general “agent harness” strategy that begins with code. It cites more than 1.6 million weekly active Codex users and more than a million desktop downloads since early February, along with an explicit ambition to turn the coding agent into a standard enterprise agent that can be deployed more broadly once security and managed deployments catch up (Fortune, Mar 4 2026).

Three sources, one shared implication: we’re leaving the era where the tool waits for the developer. We’re entering the era where the developer wakes up to what the tool did.

That’s the narrative turn worth paying attention to. Not because it’s sci‑fi. Because it changes what becomes scarce.

When the agent becomes infrastructure

When an agent is something you “use,” it’s a personal productivity story. You get faster scaffolds, fewer syntax errors, less time searching docs, and more time thinking.

When an agent is something that runs, it stops being a personal tool and starts acting like infrastructure. Infrastructure needs uptime and observability. It needs cost controls. It needs security boundaries that are enforced, not merely requested. It needs a way to fail safely at 3 a.m. when nobody is watching.

Cursor’s Automations are a clean example of that shift. The headline isn’t “more capable agent.” The headline is “the trigger moved.” A Slack message can kick off work. A timer can kick off work. A new commit can kick off work. Once the trigger moves, the unit of work stops being “a developer’s prompt” and becomes “a system reaction.”

GitHub’s code-review numbers point to the same shift from the other side of the pipeline. If AI accelerates code creation, the constraint moves downstream. Reviews become the bottleneck, and review quality becomes the difference between real velocity and chaos.

GitHub’s own framing is telling: it emphasizes “high-signal” feedback, and it’s willing to trade a bit of latency for more reliable judgment. That isn’t the language of autocomplete. That’s the language of a production service designed to keep quality stable under load.

The Codex story completes the triangle. If the harness is the product, software engineering becomes the beachhead domain: it already has formal artifacts (files, diffs, tests, CI), permissions, and a culture of auditability. Once the harness works there, it’s easy to imagine it being repurposed for other enterprise workflows.

Trust is the new delivery velocity

Here’s the uncomfortable truth: code is getting cheaper, but trust is getting more expensive.

TechCrunch’s reporting calls out the new limiter directly: when a single engineer can “oversee dozens of coding agents,” you don’t run out of compute first—you run out of attention. That attention is then spent in the least glamorous place: understanding diffs, validating behavior, and preventing plausible-looking mistakes from entering production.

GitHub’s response is revealing. The breakout metric isn’t “how many lines were generated.” It’s “how many reviews happened,” and whether those reviews were helpful enough to move pull requests forward. In a world where diffs can be produced endlessly, what matters is how quickly you can decide, with confidence, that a change is safe.

So what should teams do—concretely—if they want the upside of always-on agents without becoming a factory that ships defects faster?

Start by treating agent output like production output. Don’t ask, “Does it compile?” Ask, “Do we understand what changed and why?” If an agent can write convincing pull request descriptions, it can also write convincing nonsense. Human accountability doesn’t go away; it becomes more important.

Next, invest in cheap verification. The only scalable way to supervise more agent work is to make correctness cheaper to check than incorrectness is to explain. That means tests, yes, but also hard constraints in CI (type checks, lint rules, required status checks) that turn whole classes of errors into immediate failures. When verification is cheap and automated, you can safely let more work onto the conveyor belt.
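To make "hard constraints in CI" a little less abstract, here is a minimal sketch of a pre-merge gate that rejects an agent's change before a human ever looks at it. Everything in it is an assumption for illustration: the `FileChange` type, the `check_change` function, the protected path patterns, the 400-line limit, and the `src/` and `tests/` layout are all hypothetical, and none of it is any vendor's API.

```python
from dataclasses import dataclass
from fnmatch import fnmatch

# Hypothetical policy values; a real team would tune these per repository.
PROTECTED_GLOBS = ("infra/*", ".github/workflows/*", "*.pem")
MAX_CHANGED_LINES = 400


@dataclass(frozen=True)
class FileChange:
    """One file touched by a proposed change (illustrative type)."""
    path: str
    lines_changed: int


def check_change(changes):
    """Return a list of policy violations; an empty list means the gate passes."""
    violations = []

    # Constraint 1: oversized diffs are too expensive to review with confidence.
    total = sum(c.lines_changed for c in changes)
    if total > MAX_CHANGED_LINES:
        violations.append(
            f"diff too large: {total} lines (limit {MAX_CHANGED_LINES})"
        )

    # Constraint 2: some paths are off limits to unattended agents.
    for c in changes:
        if any(fnmatch(c.path, pattern) for pattern in PROTECTED_GLOBS):
            violations.append(f"protected path touched: {c.path}")

    # Constraint 3: source changes must arrive with test changes.
    touches_src = any(c.path.startswith("src/") for c in changes)
    touches_tests = any(c.path.startswith("tests/") for c in changes)
    if touches_src and not touches_tests:
        violations.append("source changed without a matching test change")

    return violations


if __name__ == "__main__":
    proposed = [FileChange("src/app.py", 500), FileChange("infra/prod.tf", 3)]
    for problem in check_change(proposed):
        print("BLOCKED:", problem)
```

The design choice worth noticing is that each rule is mechanical. None of them judges whether the code is good; they only shrink the set of changes a reviewer has to think hard about.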

Then get serious about boundaries. The moment an agent can run on a timer, it can also run at the wrong time. The moment it can be triggered by Slack, it can also be triggered by the wrong person. Permissions, secrets handling, and audit logs stop being “security concerns” and become core workflow design. If you can’t reconstruct what the agent did, when it did it, and what it was allowed to touch, you don’t have automation; you have liability.
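As a rough sketch of what "boundaries" could look like in practice, the snippet below checks every trigger against an allowlist and records the decision whether or not the agent is allowed to run. The `Trigger` type, the `authorize` and `handle` functions, the Slack user IDs, and the allowed timer window are all made up for illustration; they do not describe how Cursor, GitHub, or Codex implement anything.

```python
import json
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical policy: who may trigger via Slack, and when timers may fire.
ALLOWED_SLACK_USERS = {"U_ALICE", "U_BOB"}
ALLOWED_TIMER_HOURS_UTC = range(8, 18)  # 08:00 to 17:59 UTC


@dataclass(frozen=True)
class Trigger:
    """An event asking the agent to run (illustrative type)."""
    source: str   # "slack", "timer", or "commit"
    actor: str    # user ID, scheduler name, or committer
    at: datetime  # expected to be timezone-aware


def authorize(trigger):
    """Return (allowed, reason) for a trigger under the policy above."""
    if trigger.source == "slack":
        if trigger.actor in ALLOWED_SLACK_USERS:
            return True, "slack user on allowlist"
        return False, "slack user not on allowlist"
    if trigger.source == "timer":
        hour = trigger.at.astimezone(timezone.utc).hour
        if hour in ALLOWED_TIMER_HOURS_UTC:
            return True, "timer inside allowed window"
        return False, "timer outside allowed window"
    if trigger.source == "commit":
        return True, "commit triggers are allowed"
    return False, "unknown trigger source"


def handle(trigger, audit_log):
    """Decide on a trigger and append an audit record, even for denials."""
    allowed, reason = authorize(trigger)
    audit_log.append(json.dumps({
        "at": trigger.at.isoformat(),
        "source": trigger.source,
        "actor": trigger.actor,
        "allowed": allowed,
        "reason": reason,
    }))
    return allowed


if __name__ == "__main__":
    log = []
    night_run = Trigger("timer", "scheduler",
                        datetime(2026, 3, 5, 3, 0, tzinfo=timezone.utc))
    print(handle(night_run, log))
    print(log[-1])
```

Logging denials as well as approvals is deliberate: the refused 3 a.m. run is exactly the kind of event you want to be able to reconstruct later.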

Finally, update the job description. “Software engineer” is quietly acquiring a second role: operator of a system that produces diffs continuously. The best engineers will still write code. They’ll just spend more time steering, reviewing, and defining the guardrails that keep velocity honest.

The agents aren’t replacing developers.

They’re replacing the idea that development starts when a human asks nicely—and that the work is done when the code is written.